ABOUT IBM NLU

IBM Natural Language Understanding (NLU) can be used to analyze the semantic features of text such as the content of web pages, raw HTML and text documents. NLU also has the ability to analyze target phrases in context of the surrounding text for focused sentiment and emotion results.

The semantic features that can be extracted from URLs, raw HTML and text using NLU include:

IBM Natural Language Understanding (NLU) can be used to analyze the semantic features of text such as the content of web pages, raw HTML and text documents. NLU also has the ability to analyze target phrases in context of the surrounding text for focused sentiment and emotion results.

The semantic features that can be extracted from URLs, raw HTML and text using NLU include:

- Categories

- Concepts

- Emotion

- Entities

- Keywords

- Metadata

- Relations

- Semantic Roles

- Sentiment

GETTING STARTED

The first step would be to sign up for a free IBM Cloud account and log in. Once logged in to IBM Cloud, create an instance of the Natural Language Understanding service. The next important step would be to copy the credentials to authenticate to the service instance you just created. The manage page shows the credentials such as the API Key and the URL values. These values will be needed for writing python code using Natural Language Understanding in IBM Watson Studio.

The first step would be to sign up for a free IBM Cloud account and log in. Once logged in to IBM Cloud, create an instance of the Natural Language Understanding service. The next important step would be to copy the credentials to authenticate to the service instance you just created. The manage page shows the credentials such as the API Key and the URL values. These values will be needed for writing python code using Natural Language Understanding in IBM Watson Studio.

USING NLU IN WATSON STUDIO TO WRITE PYTHON CODE

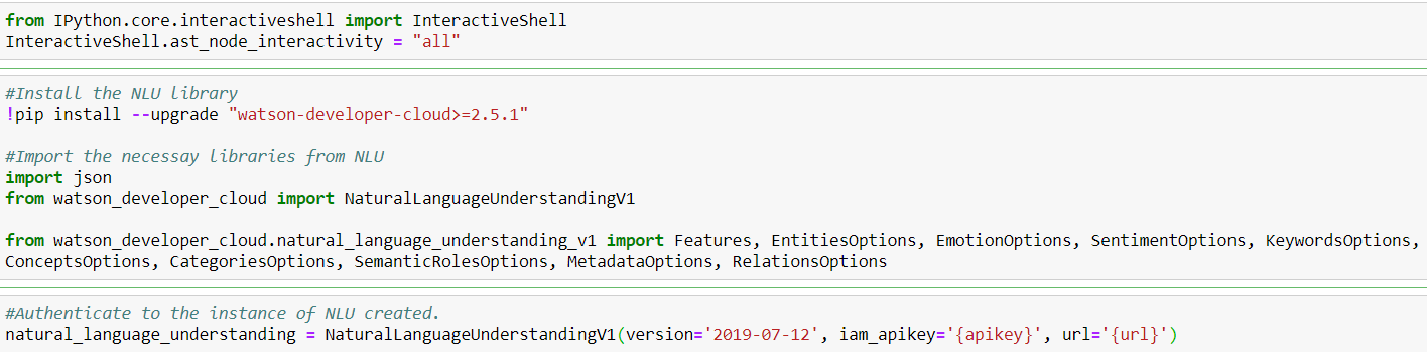

Once an instance of NLU has been created, the API Key and URL credentials can be used in a jupyter notebook in Watson Studio to write python code that extracts semantic features from web pages, text documents and raw HTML. The following code examples provide a clear illustration of how this can be achieved.

Once an instance of NLU has been created, the API Key and URL credentials can be used in a jupyter notebook in Watson Studio to write python code that extracts semantic features from web pages, text documents and raw HTML. The following code examples provide a clear illustration of how this can be achieved.

CONNECT TO NLU IN WATSON STUDIO

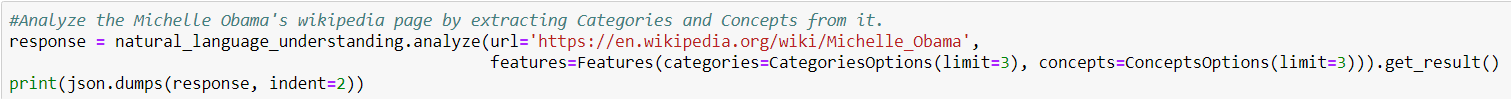

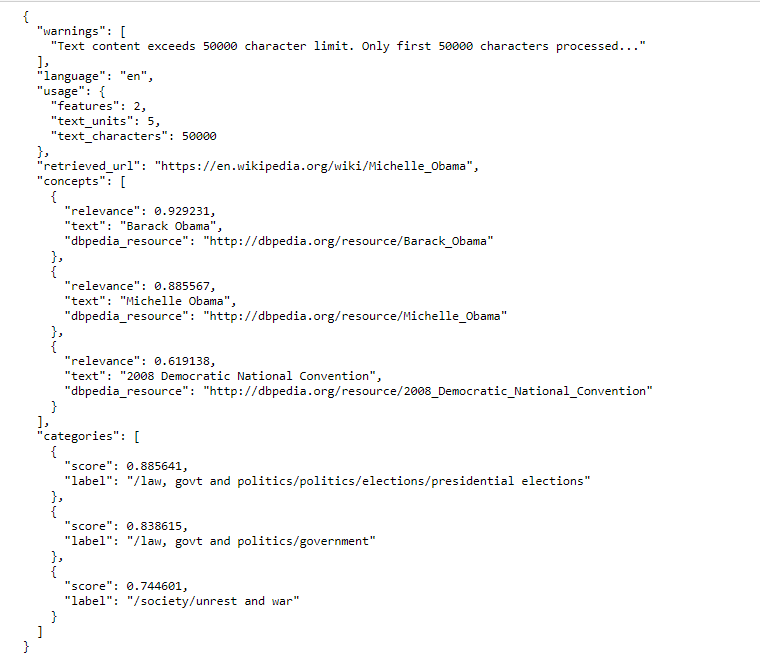

ANALYZE WEBPAGES - Extract Concepts and Categories

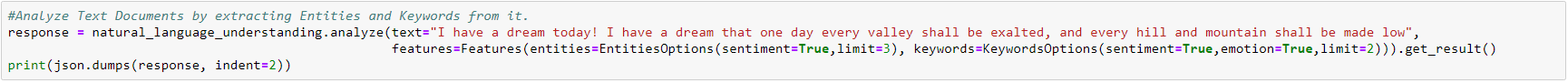

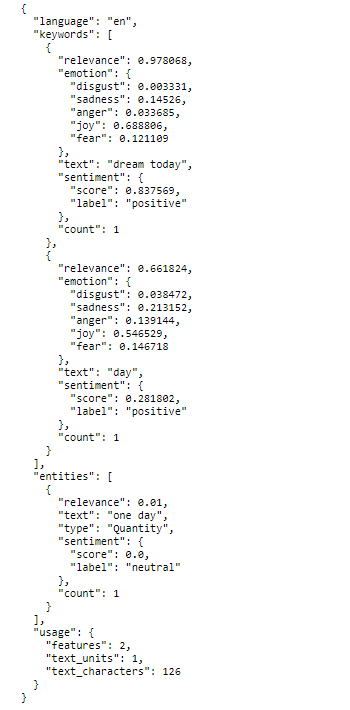

ANALYZE TEXT DOCUMENTS - Extract Keywords and Entities

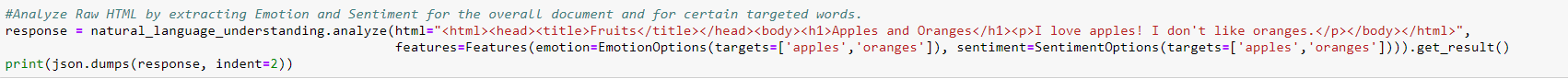

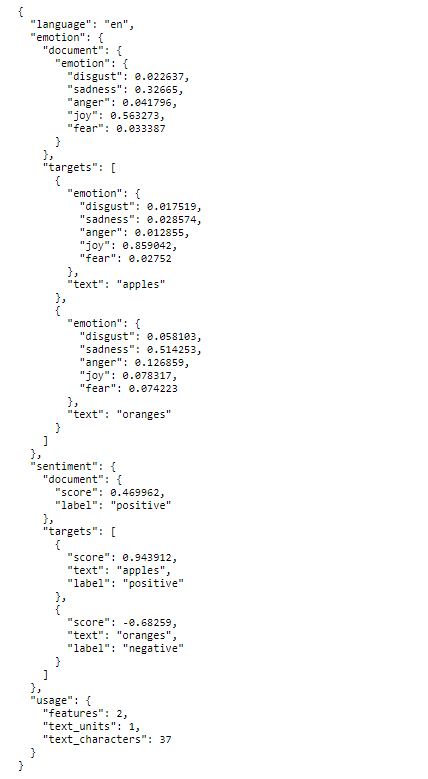

ANALYZE RAW HTML - Extract Emotion and Sentiment

CONCLUSION:

NLU is a very versatile tool. In addition to extracting the above specified semantic features from text, html and web pages, it can also be used with Watson Knowledge Studio (WKS) to extract user defined entities and relations. A model built in WKS can be deployed to NLU to extract Entities and Relations that are related to a certain topic or genre.

NLU is a very versatile tool. In addition to extracting the above specified semantic features from text, html and web pages, it can also be used with Watson Knowledge Studio (WKS) to extract user defined entities and relations. A model built in WKS can be deployed to NLU to extract Entities and Relations that are related to a certain topic or genre.

RSS Feed

RSS Feed